DAY7 基于线性回归模型的协同过滤推荐-baseline

1 Model-Based 协同过滤算法

随着机器学习技术的逐渐发展与完善,推荐系统也逐渐运用机器学习的思想来进行推荐。将机器学习应用到推荐系统中的方案真是不胜枚举。以下对Model-Based CF算法做一个大致的分类:

- 基于分类算法、回归算法、聚类算法

- 基于矩阵分解的推荐

- 基于神经网络算法

- 基于图模型算法

接下来我们重点学习以下几种应用较多的方案:

- 基于K最近邻的协同过滤推荐

- 基于回归模型的协同过滤推荐

- 基于矩阵分解的协同过滤推荐

2 基于K最近邻的协同过滤推荐

基于K最近邻的协同过滤推荐其实本质上就是MemoryBased CF,只不过在选取近邻的时候,加上K最近邻的限制。

这里我们直接根据MemoryBased CF的代码实现

修改以下地方

class CollaborativeFiltering(object):based = Nonedef __init__(self, k=40, rules=None, use_cache=False, standard=None):''':param k: 取K个最近邻来进行预测:param rules: 过滤规则,四选一,否则将抛异常:"unhot", "rated", ["unhot","rated"], None:param use_cache: 相似度计算结果是否开启缓存:param standard: 评分标准化方法,None表示不使用、mean表示均值中心化、zscore表示Z-Score标准化'''self.k = 40self.rules = rulesself.use_cache = use_cacheself.standard = standard

修改所有的选取近邻的地方的代码,根据相似度来选取K个最近邻

similar_users = self.similar[uid].drop([uid]).dropna().sort_values(ascending=False)[:self.k]similar_items = self.similar[iid].drop([iid]).dropna().sort_values(ascending=False)[:self.k]

但由于我们的原始数据较少,这里我们的KNN方法的效果会比纯粹的MemoryBasedCF要差

3 基于回归模型的协同过滤推荐

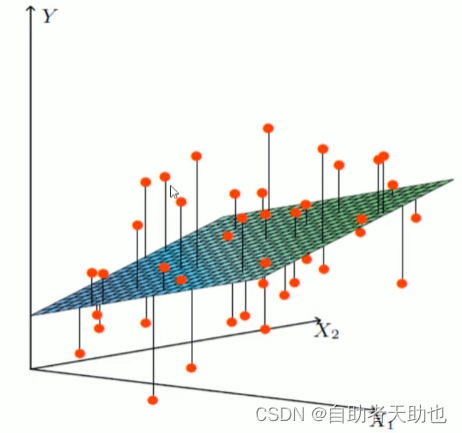

如果我们将评分看作是一个连续的值而不是离散的值,那么就可以借助线性回归思想来预测目标用户对某物品的评分。其中一种实现策略被称为Baseline(基准预测)。

3.1 Baseline:基准预测

Baseline设计思想基于以下的假设:

- 有些用户的评分普遍高于其他用户,有些用户的评分普遍低于其他用户。比如有些用户天生愿意给别人好评,心慈手软,比较好说话,而有的人就比较苛刻,总是评分不超过3分(5分满分)

- 一些物品的评分普遍高于其他物品,一些物品的评分普遍低于其他物品。比如一些物品一被生产便决定了它的地位,有的比较受人们欢迎,有的则被人嫌弃。

这个用户或物品普遍高于或低于平均值的差值,我们称为偏置(bias)

Baseline目标:

- 找出每个用户普遍高于或低于他人的偏置值bub_ubu

- 找出每件物品普遍高于或低于其他物品的偏置值bib_ibi

- 我们的目标也就转化为寻找最优的bub_ubu和bib_ibi

使用Baseline的算法思想预测评分的步骤如下:

-

计算所有电影的平均评分μ\muμ(即全局平均评分)

-

计算每个用户评分与平均评分μ\muμ的偏置值bub_ubu

-

计算每部电影所接受的评分与平均评分μ\muμ的偏置值bib_ibi

-

预测用户对电影的评分:

r^ui=bui=μ+bu+bi\hat{r}_{ui} = b_{ui} = \mu + b_u + b_i r^ui=bui=μ+bu+bi

举例:

比如我们想通过Baseline来预测用户A对电影“阿甘正传”的评分,那么首先计算出整个评分数据集的平均评分μ\muμ是3.5分;而用户A是一个比较苛刻的用户,他的评分比较严格,普遍比平均评分低0.5分,即用户A的偏置值bib_ibi是-0.5;而电影“阿甘正传”是一部比较热门而且备受好评的电影,它的评分普遍比平均评分要高1.2分,那么电影“阿甘正传”的偏置值bib_ibi是+1.2,因此就可以预测出用户A对电影“阿甘正传”的评分为:3.5+(−0.5)+1.23.5+(-0.5)+1.23.5+(−0.5)+1.2,也就是4.2分。

对于所有电影的平均评分μ\muμ是直接能计算出的,因此问题在于要测出每个用户的bub_ubu值和每部电影的bib_ibi的值。对于线性回归问题,我们可以利用平方差构建损失函数如下:

Cost=∑u,i∈R(rui−r^ui)2=∑u,i∈R(rui−μ−bu−bi)2\begin{split} Cost &= \sum_{u,i\in R}(r_{ui}-\hat{r}_{ui})^2 \\&=\sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i)^2 \end{split} Cost=u,i∈R∑(rui−r^ui)2=u,i∈R∑(rui−μ−bu−bi)2

加入L2正则化:

Cost=∑u,i∈R(rui−μ−bu−bi)2+λ∗(∑ubu2+∑ibi2)Cost=\sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i)^2 + \lambda*(\sum_u {b_u}^2 + \sum_i {b_i}^2) Cost=u,i∈R∑(rui−μ−bu−bi)2+λ∗(u∑bu2+i∑bi2)

公式解析:

- 公式第一部分$ \sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i)^2是用来寻找与已知评分数据拟合最好的是用来寻找与已知评分数据拟合最好的是用来寻找与已知评分数据拟合最好的b_u和和和b_i$

- 公式第二部分λ∗(∑ubu2+∑ibi2)\lambda*(\sum_u {b_u}^2 + \sum_i {b_i}^2)λ∗(∑ubu2+∑ibi2)是正则化项,用于避免过拟合现象

对于最小过程的求解,我们一般采用随机梯度下降法或者交替最小二乘法来优化实现。

3.2 方法一:随机梯度下降法优化

使用随机梯度下降优化算法预测Baseline偏置值

- 计算出所有用户对所有物品的平均值

- 预测的评分 = 在平均值的基础上 + 用户评分偏置 + 物品的评分偏置

- 求解所有用户的评分偏置 和 所有物品的得分偏置

step 1:梯度下降法推导

损失函数:

J(θ)=Cost=f(bu,bi)J(θ)=∑u,i∈R(rui−μ−bu−bi)2+λ∗(∑ubu2+∑ibi2)\begin{split} &J(\theta)=Cost=f(b_u, b_i)\\ \\ &J(\theta)=\sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i)^2 + \lambda*(\sum_u {b_u}^2 + \sum_i {b_i}^2) \end{split} J(θ)=Cost=f(bu,bi)J(θ)=u,i∈R∑(rui−μ−bu−bi)2+λ∗(u∑bu2+i∑bi2)

梯度下降参数更新原始公式:

θj:=θj−α∂∂θjJ(θ)\theta_j:=\theta_j-\alpha\cfrac{\partial }{\partial \theta_j}J(\theta) θj:=θj−α∂θj∂J(θ)

梯度下降更新bub_ubu:

损失函数偏导推导:

∂∂buJ(θ)=∂∂buf(bu,bi)=2∑u,i∈R(rui−μ−bu−bi)(−1)+2λbu=−2∑u,i∈R(rui−μ−bu−bi)+2λ∗bu\begin{split} \cfrac{\partial}{\partial b_u} J(\theta)&=\cfrac{\partial}{\partial b_u} f(b_u, b_i) \\&=2\sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i)(-1) + 2\lambda{b_u} \\&=-2\sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i) + 2\lambda*b_u \end{split} ∂bu∂J(θ)=∂bu∂f(bu,bi)=2u,i∈R∑(rui−μ−bu−bi)(−1)+2λbu=−2u,i∈R∑(rui−μ−bu−bi)+2λ∗bu

bub_ubu更新(因为alpha可以人为控制,所以2可以省略掉):

bu:=bu−α∗(−∑u,i∈R(rui−μ−bu−bi)+λ∗bu):=bu+α∗(∑u,i∈R(rui−μ−bu−bi)−λ∗bu)\begin{split} b_u&:=b_u - \alpha*(-\sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i) + \lambda * b_u)\\ &:=b_u + \alpha*(\sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i) - \lambda* b_u) \end{split} bu:=bu−α∗(−u,i∈R∑(rui−μ−bu−bi)+λ∗bu):=bu+α∗(u,i∈R∑(rui−μ−bu−bi)−λ∗bu)

同理可得,梯度下降更新bib_ibi:

bi:=bi+α∗(∑u,i∈R(rui−μ−bu−bi)−λ∗bi)b_i:=b_i + \alpha*(\sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i) -\lambda*b_i) bi:=bi+α∗(u,i∈R∑(rui−μ−bu−bi)−λ∗bi)

step 2:随机梯度下降

由于随机梯度下降法本质上利用每个样本的损失来更新参数,而不用每次求出全部的损失和,因此使用SGD时:

单样本损失值:

error=rui−r^ui=rui−(μ+bu+bi)=rui−μ−bu−bi\begin{split} error &=r_{ui}-\hat{r}_{ui} \\&= r_{ui}-(\mu+b_u+b_i) \\&= r_{ui}-\mu-b_u-b_i \end{split} error=rui−r^ui=rui−(μ+bu+bi)=rui−μ−bu−bi

参数更新:

bu:=bu+α∗((rui−μ−bu−bi)−λ∗bu):=bu+α∗(error−λ∗bu)bi:=bi+α∗((rui−μ−bu−bi)−λ∗bi):=bi+α∗(error−λ∗bi)\begin{split} b_u&:=b_u + \alpha*((r_{ui}-\mu-b_u-b_i) -\lambda*b_u) \\ &:=b_u + \alpha*(error - \lambda*b_u) \\ \\ b_i&:=b_i + \alpha*((r_{ui}-\mu-b_u-b_i) -\lambda*b_i)\\ &:=b_i + \alpha*(error -\lambda*b_i) \end{split} bubi:=bu+α∗((rui−μ−bu−bi)−λ∗bu):=bu+α∗(error−λ∗bu):=bi+α∗((rui−μ−bu−bi)−λ∗bi):=bi+α∗(error−λ∗bi)

step 3:算法实现

import pandas as pd

import numpy as npclass BaselineCFBySGD(object):def __init__(self, number_epochs, alpha, reg, columns=["uid", "iid", "rating"]):# 梯度下降最高迭代次数self.number_epochs = number_epochs# 学习率self.alpha = alpha# 正则参数self.reg = reg# 数据集中user-item-rating字段的名称self.columns = columnsdef fit(self, dataset):''':param dataset: uid, iid, rating:return:'''self.dataset = dataset# 用户评分数据self.users_ratings = dataset.groupby(self.columns[0]).agg([list])[[self.columns[1], self.columns[2]]]# 物品评分数据self.items_ratings = dataset.groupby(self.columns[1]).agg([list])[[self.columns[0], self.columns[2]]]# 计算全局平均分self.global_mean = self.dataset[self.columns[2]].mean()# 调用sgd方法训练模型参数self.bu, self.bi = self.sgd()def sgd(self):'''利用随机梯度下降,优化bu,bi的值:return: bu, bi'''# 初始化bu、bi的值,全部设为0bu = dict(zip(self.users_ratings.index, np.zeros(len(self.users_ratings))))bi = dict(zip(self.items_ratings.index, np.zeros(len(self.items_ratings))))for i in range(self.number_epochs):print("iter%d" % i)for uid, iid, real_rating in self.dataset.itertuples(index=False):error = real_rating - (self.global_mean + bu[uid] + bi[iid])bu[uid] += self.alpha * (error - self.reg * bu[uid])bi[iid] += self.alpha * (error - self.reg * bi[iid])return bu, bidef predict(self, uid, iid):predict_rating = self.global_mean + self.bu[uid] + self.bi[iid]return predict_ratingif __name__ == '__main__':dtype = [("userId", np.int32), ("movieId", np.int32), ("rating", np.float32)]dataset = pd.read_csv("datasets/ml-latest-small/ratings.csv", usecols=range(3), dtype=dict(dtype))bcf = BaselineCFBySGD(20, 0.1, 0.1, ["userId", "movieId", "rating"])bcf.fit(dataset)while True:uid = int(input("uid: "))iid = int(input("iid: "))print(bcf.predict(uid, iid))

Step 4: 准确性指标评估

- 添加test方法,然后使用之前实现accuary方法计算准确性指标

import pandas as pd

import numpy as npdef data_split(data_path, x=0.8, random=False):'''切分数据集, 这里为了保证用户数量保持不变,将每个用户的评分数据按比例进行拆分:param data_path: 数据集路径:param x: 训练集的比例,如x=0.8,则0.2是测试集:param random: 是否随机切分,默认False:return: 用户-物品评分矩阵'''print("开始切分数据集...")# 设置要加载的数据字段的类型dtype = {"userId": np.int32, "movieId": np.int32, "rating": np.float32}# 加载数据,我们只用前三列数据,分别是用户ID,电影ID,已经用户对电影的对应评分ratings = pd.read_csv(data_path, dtype=dtype, usecols=range(3))testset_index = []# 为了保证每个用户在测试集和训练集都有数据,因此按userId聚合for uid in ratings.groupby("userId").any().index:user_rating_data = ratings.where(ratings["userId"]==uid).dropna()if random:# 因为不可变类型不能被 shuffle方法作用,所以需要强行转换为列表index = list(user_rating_data.index)np.random.shuffle(index) # 打乱列表_index = round(len(user_rating_data) * x)testset_index += list(index[_index:])else:# 将每个用户的x比例的数据作为训练集,剩余的作为测试集index = round(len(user_rating_data) * x)testset_index += list(user_rating_data.index.values[index:])testset = ratings.loc[testset_index]trainset = ratings.drop(testset_index)print("完成数据集切分...")return trainset, testsetdef accuray(predict_results, method="all"):'''准确性指标计算方法:param predict_results: 预测结果,类型为容器,每个元素是一个包含uid,iid,real_rating,pred_rating的序列:param method: 指标方法,类型为字符串,rmse或mae,否则返回两者rmse和mae:return:'''def rmse(predict_results):'''rmse评估指标:param predict_results::return: rmse'''length = 0_rmse_sum = 0for uid, iid, real_rating, pred_rating in predict_results:length += 1_rmse_sum += (pred_rating - real_rating) ** 2return round(np.sqrt(_rmse_sum / length), 4)def mae(predict_results):'''mae评估指标:param predict_results::return: mae'''length = 0_mae_sum = 0for uid, iid, real_rating, pred_rating in predict_results:length += 1_mae_sum += abs(pred_rating - real_rating)return round(_mae_sum / length, 4)def rmse_mae(predict_results):'''rmse和mae评估指标:param predict_results::return: rmse, mae'''length = 0_rmse_sum = 0_mae_sum = 0for uid, iid, real_rating, pred_rating in predict_results:length += 1_rmse_sum += (pred_rating - real_rating) ** 2_mae_sum += abs(pred_rating - real_rating)return round(np.sqrt(_rmse_sum / length), 4), round(_mae_sum / length, 4)if method.lower() == "rmse":rmse(predict_results)elif method.lower() == "mae":mae(predict_results)else:return rmse_mae(predict_results)class BaselineCFBySGD(object):def __init__(self, number_epochs, alpha, reg, columns=["uid", "iid", "rating"]):# 梯度下降最高迭代次数self.number_epochs = number_epochs# 学习率self.alpha = alpha# 正则参数self.reg = reg# 数据集中user-item-rating字段的名称self.columns = columnsdef fit(self, dataset):''':param dataset: uid, iid, rating:return:'''self.dataset = dataset# 用户评分数据self.users_ratings = dataset.groupby(self.columns[0]).agg([list])[[self.columns[1], self.columns[2]]]# 物品评分数据self.items_ratings = dataset.groupby(self.columns[1]).agg([list])[[self.columns[0], self.columns[2]]]# 计算全局平均分self.global_mean = self.dataset[self.columns[2]].mean()# 调用sgd方法训练模型参数self.bu, self.bi = self.sgd()def sgd(self):'''利用随机梯度下降,优化bu,bi的值:return: bu, bi'''# 初始化bu、bi的值,全部设为0bu = dict(zip(self.users_ratings.index, np.zeros(len(self.users_ratings))))bi = dict(zip(self.items_ratings.index, np.zeros(len(self.items_ratings))))for i in range(self.number_epochs):print("iter%d" % i)for uid, iid, real_rating in self.dataset.itertuples(index=False):error = real_rating - (self.global_mean + bu[uid] + bi[iid])bu[uid] += self.alpha * (error - self.reg * bu[uid])bi[iid] += self.alpha * (error - self.reg * bi[iid])return bu, bidef predict(self, uid, iid):'''评分预测'''if iid not in self.items_ratings.index:raise Exception("无法预测用户<{uid}>对电影<{iid}>的评分,因为训练集中缺失<{iid}>的数据".format(uid=uid, iid=iid))predict_rating = self.global_mean + self.bu[uid] + self.bi[iid]return predict_ratingdef test(self,testset):'''预测测试集数据'''for uid, iid, real_rating in testset.itertuples(index=False):try:pred_rating = self.predict(uid, iid)except Exception as e:print(e)else:yield uid, iid, real_rating, pred_ratingif __name__ == '__main__':trainset, testset = data_split("datasets/ml-latest-small/ratings.csv", random=True)bcf = BaselineCFBySGD(20, 0.1, 0.1, ["userId", "movieId", "rating"])bcf.fit(trainset)pred_results = bcf.test(testset)rmse, mae = accuray(pred_results)print("rmse: ", rmse, "mae: ", mae)3.3 方法二:交替最小二乘法优化

使用交替最小二乘法优化算法预测Baseline偏置值

step 1: 交替最小二乘法推导

最小二乘法和梯度下降法一样,可以用于求极值。

最小二乘法思想:对损失函数求偏导,然后再使偏导为0

同样,损失函数:

J(θ)=∑u,i∈R(rui−μ−bu−bi)2+λ∗(∑ubu2+∑ibi2)J(\theta)=\sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i)^2 + \lambda*(\sum_u {b_u}^2 + \sum_i {b_i}^2) J(θ)=u,i∈R∑(rui−μ−bu−bi)2+λ∗(u∑bu2+i∑bi2)

对损失函数求偏导:

∂∂buf(bu,bi)=−2∑u,i∈R(rui−μ−bu−bi)+2λ∗bu\cfrac{\partial}{\partial b_u} f(b_u, b_i) =-2 \sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i) + 2\lambda * b_u ∂bu∂f(bu,bi)=−2u,i∈R∑(rui−μ−bu−bi)+2λ∗bu

令偏导为0,则可得:

∑u,i∈R(rui−μ−bu−bi)=λ∗bu∑u,i∈R(rui−μ−bi)=∑u,i∈Rbu+λ∗bu\sum_{u,i\in R}(r_{ui}-\mu-b_u-b_i) = \lambda* b_u \\\sum_{u,i\in R}(r_{ui}-\mu-b_i) = \sum_{u,i\in R} b_u+\lambda * b_u u,i∈R∑(rui−μ−bu−bi)=λ∗buu,i∈R∑(rui−μ−bi)=u,i∈R∑bu+λ∗bu

为了简化公式,这里令∑u,i∈Rbu≈∣R(u)∣∗bu\sum_{u,i\in R} b_u \approx |R(u)|*b_u∑u,i∈Rbu≈∣R(u)∣∗bu,即直接假设每一项的偏置都相等,可得:

bu:=∑u,i∈R(rui−μ−bi)λ1+∣R(u)∣b_u := \cfrac {\sum_{u,i\in R}(r_{ui}-\mu-b_i)}{\lambda_1 + |R(u)|} bu:=λ1+∣R(u)∣∑u,i∈R(rui−μ−bi)

其中∣R(u)∣|R(u)|∣R(u)∣表示用户uuu的有过评分数量

同理可得:

bi:=∑u,i∈R(rui−μ−bu)λ2+∣R(i)∣b_i := \cfrac {\sum_{u,i\in R}(r_{ui}-\mu-b_u)}{\lambda_2 + |R(i)|} bi:=λ2+∣R(i)∣∑u,i∈R(rui−μ−bu)

其中∣R(i)∣|R(i)|∣R(i)∣表示物品iii收到的评分数量

bub_ubu和bib_ibi分别属于用户和物品的偏置,因此他们的正则参数可以分别设置两个独立的参数

step 2: 交替最小二乘法应用

通过最小二乘推导,我们最终分别得到了bub_ubu和bib_ibi的表达式,但他们的表达式中却又各自包含对方,因此这里我们将利用一种叫交替最小二乘的方法来计算他们的值:

- 计算其中一项,先固定其他未知参数,即看作其他未知参数为已知

- 如求bub_ubu时,将bib_ibi看作是已知;求bib_ibi时,将bub_ubu看作是已知;如此反复交替,不断更新二者的值,求得最终的结果。这就是交替最小二乘法(ALS)

step 3: 算法实现

import pandas as pd

import numpy as npclass BaselineCFByALS(object):def __init__(self, number_epochs, reg_bu, reg_bi, columns=["uid", "iid", "rating"]):# 梯度下降最高迭代次数self.number_epochs = number_epochs# bu的正则参数self.reg_bu = reg_bu# bi的正则参数self.reg_bi = reg_bi# 数据集中user-item-rating字段的名称self.columns = columnsdef fit(self, dataset):''':param dataset: uid, iid, rating:return:'''self.dataset = dataset# 用户评分数据self.users_ratings = dataset.groupby(self.columns[0]).agg([list])[[self.columns[1], self.columns[2]]]# 物品评分数据self.items_ratings = dataset.groupby(self.columns[1]).agg([list])[[self.columns[0], self.columns[2]]]# 计算全局平均分self.global_mean = self.dataset[self.columns[2]].mean()# 调用sgd方法训练模型参数self.bu, self.bi = self.als()def als(self):'''利用随机梯度下降,优化bu,bi的值:return: bu, bi'''# 初始化bu、bi的值,全部设为0bu = dict(zip(self.users_ratings.index, np.zeros(len(self.users_ratings))))bi = dict(zip(self.items_ratings.index, np.zeros(len(self.items_ratings))))for i in range(self.number_epochs):print("iter%d" % i)for iid, uids, ratings in self.items_ratings.itertuples(index=True):_sum = 0for uid, rating in zip(uids, ratings):_sum += rating - self.global_mean - bu[uid]bi[iid] = _sum / (self.reg_bi + len(uids))for uid, iids, ratings in self.users_ratings.itertuples(index=True):_sum = 0for iid, rating in zip(iids, ratings):_sum += rating - self.global_mean - bi[iid]bu[uid] = _sum / (self.reg_bu + len(iids))return bu, bidef predict(self, uid, iid):predict_rating = self.global_mean + self.bu[uid] + self.bi[iid]return predict_ratingif __name__ == '__main__':dtype = [("userId", np.int32), ("movieId", np.int32), ("rating", np.float32)]dataset = pd.read_csv("datasets/ml-latest-small/ratings.csv", usecols=range(3), dtype=dict(dtype))bcf = BaselineCFByALS(20, 25, 15, ["userId", "movieId", "rating"])bcf.fit(dataset)while True:uid = int(input("uid: "))iid = int(input("iid: "))print(bcf.predict(uid, iid))

Step 4: 准确性指标评估

import pandas as pd

import numpy as npdef data_split(data_path, x=0.8, random=False):'''切分数据集, 这里为了保证用户数量保持不变,将每个用户的评分数据按比例进行拆分:param data_path: 数据集路径:param x: 训练集的比例,如x=0.8,则0.2是测试集:param random: 是否随机切分,默认False:return: 用户-物品评分矩阵'''print("开始切分数据集...")# 设置要加载的数据字段的类型dtype = {"userId": np.int32, "movieId": np.int32, "rating": np.float32}# 加载数据,我们只用前三列数据,分别是用户ID,电影ID,已经用户对电影的对应评分ratings = pd.read_csv(data_path, dtype=dtype, usecols=range(3))testset_index = []# 为了保证每个用户在测试集和训练集都有数据,因此按userId聚合for uid in ratings.groupby("userId").any().index:user_rating_data = ratings.where(ratings["userId"]==uid).dropna()if random:# 因为不可变类型不能被 shuffle方法作用,所以需要强行转换为列表index = list(user_rating_data.index)np.random.shuffle(index) # 打乱列表_index = round(len(user_rating_data) * x)testset_index += list(index[_index:])else:# 将每个用户的x比例的数据作为训练集,剩余的作为测试集index = round(len(user_rating_data) * x)testset_index += list(user_rating_data.index.values[index:])testset = ratings.loc[testset_index]trainset = ratings.drop(testset_index)print("完成数据集切分...")return trainset, testsetdef accuray(predict_results, method="all"):'''准确性指标计算方法:param predict_results: 预测结果,类型为容器,每个元素是一个包含uid,iid,real_rating,pred_rating的序列:param method: 指标方法,类型为字符串,rmse或mae,否则返回两者rmse和mae:return:'''def rmse(predict_results):'''rmse评估指标:param predict_results::return: rmse'''length = 0_rmse_sum = 0for uid, iid, real_rating, pred_rating in predict_results:length += 1_rmse_sum += (pred_rating - real_rating) ** 2return round(np.sqrt(_rmse_sum / length), 4)def mae(predict_results):'''mae评估指标:param predict_results::return: mae'''length = 0_mae_sum = 0for uid, iid, real_rating, pred_rating in predict_results:length += 1_mae_sum += abs(pred_rating - real_rating)return round(_mae_sum / length, 4)def rmse_mae(predict_results):'''rmse和mae评估指标:param predict_results::return: rmse, mae'''length = 0_rmse_sum = 0_mae_sum = 0for uid, iid, real_rating, pred_rating in predict_results:length += 1_rmse_sum += (pred_rating - real_rating) ** 2_mae_sum += abs(pred_rating - real_rating)return round(np.sqrt(_rmse_sum / length), 4), round(_mae_sum / length, 4)if method.lower() == "rmse":rmse(predict_results)elif method.lower() == "mae":mae(predict_results)else:return rmse_mae(predict_results)class BaselineCFByALS(object):def __init__(self, number_epochs, reg_bu, reg_bi, columns=["uid", "iid", "rating"]):# 梯度下降最高迭代次数self.number_epochs = number_epochs# bu的正则参数self.reg_bu = reg_bu# bi的正则参数self.reg_bi = reg_bi# 数据集中user-item-rating字段的名称self.columns = columnsdef fit(self, dataset):''':param dataset: uid, iid, rating:return:'''self.dataset = dataset# 用户评分数据self.users_ratings = dataset.groupby(self.columns[0]).agg([list])[[self.columns[1], self.columns[2]]]# 物品评分数据self.items_ratings = dataset.groupby(self.columns[1]).agg([list])[[self.columns[0], self.columns[2]]]# 计算全局平均分self.global_mean = self.dataset[self.columns[2]].mean()# 调用sgd方法训练模型参数self.bu, self.bi = self.als()def als(self):'''利用随机梯度下降,优化bu,bi的值:return: bu, bi'''# 初始化bu、bi的值,全部设为0bu = dict(zip(self.users_ratings.index, np.zeros(len(self.users_ratings))))bi = dict(zip(self.items_ratings.index, np.zeros(len(self.items_ratings))))for i in range(self.number_epochs):print("iter%d" % i)for iid, uids, ratings in self.items_ratings.itertuples(index=True):_sum = 0for uid, rating in zip(uids, ratings):_sum += rating - self.global_mean - bu[uid]bi[iid] = _sum / (self.reg_bi + len(uids))for uid, iids, ratings in self.users_ratings.itertuples(index=True):_sum = 0for iid, rating in zip(iids, ratings):_sum += rating - self.global_mean - bi[iid]bu[uid] = _sum / (self.reg_bu + len(iids))return bu, bidef predict(self, uid, iid):'''评分预测'''if iid not in self.items_ratings.index:raise Exception("无法预测用户<{uid}>对电影<{iid}>的评分,因为训练集中缺失<{iid}>的数据".format(uid=uid, iid=iid))predict_rating = self.global_mean + self.bu[uid] + self.bi[iid]return predict_ratingdef test(self,testset):'''预测测试集数据'''for uid, iid, real_rating in testset.itertuples(index=False):try:pred_rating = self.predict(uid, iid)except Exception as e:print(e)else:yield uid, iid, real_rating, pred_ratingif __name__ == '__main__':trainset, testset = data_split("datasets/ml-latest-small/ratings.csv", random=True)bcf = BaselineCFByALS(20, 25, 15, ["userId", "movieId", "rating"])bcf.fit(trainset)pred_results = bcf.test(testset)rmse, mae = accuray(pred_results)print("rmse: ", rmse, "mae: ", mae)

函数求导:

[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-RyVqrwdM-1674986540076)(/img/常见函数求导.png)]

[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-UK0k9UCK-1674986540077)(/img/导数的四则运算.png)]